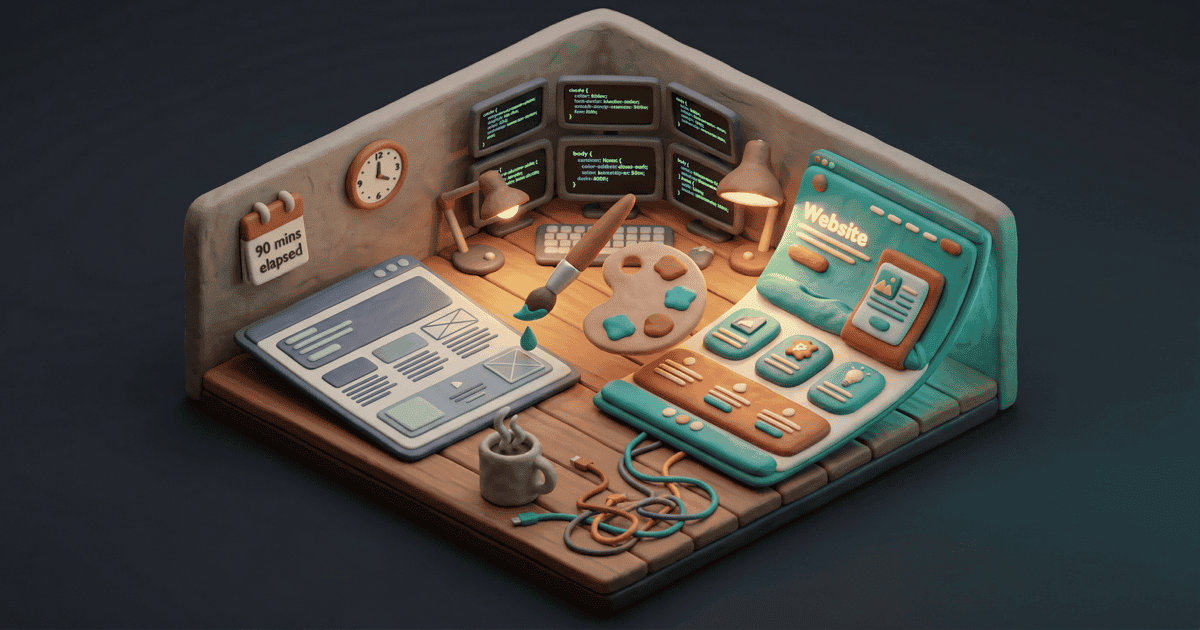

Tomorrow, Signal Over Noise goes paid. The site needed to look different: not just “updated,” but visually part of a family with jimchristian.net and this site. Same claymorphic 3D aesthetic, different palette. Shared teal (#1B9AAA) tying everything together, burnt orange (#EF6351) as SoN’s signature accent.

I gave Claude the plan at 1:30 PM. By 3:00 PM the site was deployed. Here’s what actually happened in those 90 minutes — including the part where I nearly generated every image in the wrong style.

CSS First

Replaced 20+ colour variables in global.css. Swapped the shadow system to claymorphic (dual shadows — dark outer for depth, light inner for the “pressed into clay” effect). Changed buttons from square corners to pill shapes. Backgrounds from flat white to warm cream.

Then bulk-replaced every hardcoded rgba(224, 123, 79 — the old coral accent — across six page files. Inline styles, hover states, gradient definitions. One search-and-replace pass caught them all. One build command. Zero errors.
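A minimal sketch of that kind of bulk replacement, in Python. The old prefix `rgba(224, 123, 79` is the one quoted above. Everything else is an assumption for illustration: the function name, the file extensions, and the new prefix, which is simply the RGB form of #EF6351.

```python
from pathlib import Path

OLD_PREFIX = "rgba(224, 123, 79"  # old coral accent, as quoted in the post
NEW_PREFIX = "rgba(239, 99, 81"   # assumption: RGB form of #EF6351


def replace_accent(root: Path, suffixes=(".astro", ".html", ".css")) -> dict[str, int]:
    """Replace the old accent prefix in every matching file under root.

    Returns a mapping of file path -> number of replacements made.
    The suffix list is hypothetical; adjust it to the project's page files.
    """
    changed: dict[str, int] = {}
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        text = path.read_text(encoding="utf-8")
        count = text.count(OLD_PREFIX)
        if count:
            path.write_text(text.replace(OLD_PREFIX, NEW_PREFIX), encoding="utf-8")
            changed[str(path)] = count
    return changed
```

Matching on the prefix rather than the full `rgba(...)` value leaves each alpha channel untouched, so hover states and gradients keep their original opacity.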

The Near Miss

I was about to fire off hero image prompts when I checked aesthetic.md — the global config that governs image generation across every project. It still described hand-drawn sketches on a cream background. The old SoN aesthetic.

What I almost generated: Flat line drawings with hand-drawn textures. Cream background, pencil-sketch style. The previous SoN look.

What I actually needed: 3D claymorphic dioramas. Soft polymer clay, isometric perspective, warm lighting. The new family aesthetic.

If I hadn’t caught it, every image would have been flat illustrations with claymorphic colours — a hybrid that matched nothing. CSS saying “3D clay buttons,” images saying “pencil sketch.” I’d have noticed immediately, but I’d have burned 20 minutes generating images I’d throw away.

The thing is, I almost didn’t check. The prompts were ready. Something — paranoia, the memory of a previous session where I’d wasted half an hour on bad generations — made me open the global config first. Just to see. And there it was, ready to cascade through every image.

Five-second decision, twenty minutes saved. But it only happened because I’d been burned before. There’s no system for it yet. Just scar tissue.

Rewrote the entire aesthetic file before generating anything.

The Layered Config Problem

This is the most transferable thing in the whole session. The image generation system has three layers:

| Layer | File | Controls |
|---|---|---|
| 1. Global aesthetic | aesthetic.md | Default style for ALL images |
| 2. Site template | HERO-IMAGE-TEMPLATE.md | Overrides for a specific project |
| 3. Per-image prompt | The actual /art command | One-off generation instruction |

I’d created layer 2 with all the right claymorphic specs. But layer 1 still said “hand-drawn sketch.” The template referenced the global aesthetic for base parameters. Without my catching it, the skill would have read layer 1 first, seen “sketch style,” and generated flat illustrations with claymorphic colours layered on top.

Same failure mode as CSS specificity (you change a class rule but an ID rule elsewhere overrides it) or Docker Compose overrides (you edit the override file but the base file contradicts it). I changed one layer and didn’t check what it depended on.

This is genuinely dangerous because every layer looks right on its own. The global aesthetic said “hand-drawn sketch” — correct, that was the style. The site template said “claymorphic 3D” — correct, that is the new style. Both pass review individually. The conflict only exists at runtime, when the system reads them in sequence and the first one wins.
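To make the failure concrete, here is a toy sketch in Python. It assumes each layer reduces to key/value settings and that any key the template leaves unset falls through from the global file. The real resolution logic inside the skill isn't described here, so the function, keys, and values are illustrative only.

```python
def resolve(global_layer: dict[str, str], template_layer: dict[str, str]) -> dict[str, str]:
    """Assumed behaviour: template keys override, everything else comes from global."""
    return {**global_layer, **template_layer}


# Each layer is internally consistent when read on its own.
global_aesthetic = {
    "style": "hand-drawn editorial illustration",
    "background": "#F5F0E8",
}
site_template = {
    # Hypothetical: the template sets palette and background but defers style to global.
    "palette": "teal #1B9AAA, burnt orange #EF6351",
    "background": "#FFF8F0",
}

effective = resolve(global_aesthetic, site_template)
for key, value in effective.items():
    print(f"{key}: {value}")
```

Under these assumptions the effective settings keep the sketch style while picking up the new palette and background, which is the hybrid described above. Neither input is wrong; only the merged result is.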

What the aesthetic.md looked like before and after

Before (hand-drawn sketch style):

Style: Hand-drawn editorial illustrations

Background: Cream/off-white (#F5F0E8)

Line work: Confident ink strokes, slightly imperfect

Colour: Muted earth tones with one accent pop

Feel: New Yorker illustration meets technical diagram

After (claymorphic 3D):

Style: Isometric 3D claymorphic dioramas

Background: Warm cream (#FFF8F0) for SoN, dark charcoal (#1E1E2E) for SBC

Material: Soft polymer clay, rounded pillow-like edges

Lighting: Warm directional with soft ambient fill

Colour: Teal primary (#1B9AAA), burnt orange accent (#EF6351)

Feel: Blender cycles render, matte clay textures

Eight Images in Twenty Minutes

Homepage, newsletter, services, about, insights, OG image, LinkedIn banner, newsletter masthead. All through Gemini Flash image generation. Each came out at ~6MB raw, compressed with pngquant to 1.5-1.8MB. Platform-specific images resized with sips to exact pixel specs — Kit masthead at 1200x298, LinkedIn banner at 1128x191.
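A rough sketch of the compress-and-resize step, in Python. The pixel sizes come from the paragraph above. The helper names and the pngquant quality range are assumptions, since the settings actually used aren't recorded here. Note that `sips` ships only with macOS.

```python
import shutil
import subprocess
from pathlib import Path

# Target sizes from the post, as (width, height) in pixels.
PLATFORM_SIZES = {"kit-masthead": (1200, 298), "linkedin-banner": (1128, 191)}


def pngquant_cmd(src: Path, dst: Path, quality: str = "65-80") -> list[str]:
    # The quality range is an assumption, not the setting used in the session.
    return ["pngquant", "--quality", quality, "--force", "--output", str(dst), str(src)]


def sips_resize_cmd(src: Path, dst: Path, platform: str) -> list[str]:
    width, height = PLATFORM_SIZES[platform]
    # sips -z takes height first, then width, and does not preserve aspect ratio.
    return ["sips", "-z", str(height), str(width), str(src), "--out", str(dst)]


def run_if_available(cmd: list[str]) -> bool:
    """Run cmd only when the tool is installed; return whether it ran."""
    if shutil.which(cmd[0]) is None:
        return False
    subprocess.run(cmd, check=True)
    return True
```

Building each command as a list first makes it easy to print and inspect before anything touches the image files, for example `run_if_available(sips_resize_cmd(Path("banner.png"), Path("banner-li.png"), "linkedin-banner"))`.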

Generation was fast. Writing the prompts was the bottleneck — getting the clay texture right, keeping the isometric perspective consistent, colour coherence across images. Each prompt was 4-5 lines of specific art direction. (I wrote up the full visual content system separately — how the art skill works, model routing, compression pipeline.)

Six Articles and Two Factual Errors

No new content since February 12th. A newsletter-researcher agent pulled 12 AI news items from the past three weeks. I picked 6, wrote them up, ran each through a draft-reviewer that stripped section headers, tightened phrasing, and killed redundancy.

Then I realised none of the articles had source hyperlinks. Every claim was unsourced. A research agent went back and found the actual URLs.

And caught two factual errors in the process:

Error 1: The Microsoft Copilot adoption figure was written as 3.3%. The actual figure from the source was 3%.

Error 2: Microsoft’s MCP governance guidance was dated February 12th. It was actually published February 26th.

To make this concrete:

What the draft said: “Microsoft reported that only 3.3% of enterprises have broadly deployed Copilot beyond pilot programs.”

What the source actually said: “…only about 3% of enterprises have moved Copilot beyond initial pilots into broad deployment.”

3% vs 3.3% sounds trivial. But 3.3% is false precision — the source never claimed that specificity. And once there’s a hyperlink next to it, the mismatch is one click away. Small errors erode trust faster than big ones.

Both errors were plausible. Neither was caught by the draft-reviewer — they were caught by the verification step of finding the source URLs. The act of sourcing the claims is what revealed the claims were wrong.

Sourced hyperlinks are now mandatory in the insight workflow. The URL gets found before the sentence gets written, not retrofitted after.
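One way to enforce the rule mechanically is a small lint pass over each draft. This sketch assumes the articles are Markdown with inline `[text](url)` links and treats any paragraph that contains a digit as a claim needing a source. Both the heuristic and the function name are hypothetical.

```python
import re

# Inline Markdown link with an http(s) URL.
LINK = re.compile(r"\[[^\]]+\]\(https?://[^)\s]+\)")
HAS_FIGURE = re.compile(r"\d")


def unsourced_claims(markdown: str) -> list[str]:
    """Return paragraphs that mention a number but carry no inline link."""
    flagged = []
    for para in re.split(r"\n\s*\n", markdown.strip()):
        if HAS_FIGURE.search(para) and not LINK.search(para):
            flagged.append(para)
    return flagged


draft = (
    "Only 3.3% of enterprises have deployed Copilot broadly.\n\n"
    "Only [about 3%](https://example.com/report) have moved beyond pilots.\n\n"
    "No figures here."
)
print(unsourced_claims(draft))
```

A check like this only proves that a link exists. It would not have caught 3.3% versus 3% on its own; that still takes someone opening the source and reading it.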

The Numbers

| Metric | Value |
|---|---|
| Total time | ~90 minutes |
| Time building (CSS, images, content) | ~55 minutes |
| Time verifying and fixing | ~35 minutes |
| CSS variables changed | 20+ |
| Pages updated | 10 |
| Images generated | 8 |
| Articles written | 6 |
| Factual errors caught | 2 |
| Deploys | 2 |
| Git commits | 2 |

Roughly 60/40 building to verifying. I expected 80/20. But checking configs, sourcing URLs, and catching errors the draft-reviewer missed accounted for more than a third of the session. And that third is the only reason the output was usable.

What I’d Do Differently

The source hyperlink problem shouldn’t have been a post-hoc fix. Write around the sourced claims, not the other way around. The process being backwards created a window where unsourced claims existed in “finished” content — and two of them were wrong.

The layered config near-miss is harder to prevent. You’d need a pre-generation check that reads all three layers and flags conflicts. That check doesn’t exist yet. I caught it by instinct, not by process.
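A first pass at such a check could be quite small. The sketch below assumes each layer can be read as `Key: value` lines, like the aesthetic.md excerpt earlier, and reports any key that different layers set to different values. The function names are hypothetical and nothing like this exists in the current setup.

```python
def parse_layer(text: str) -> dict[str, str]:
    """Parse 'Key: value' lines; ignore anything that doesn't fit that shape."""
    settings = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() and value.strip():
            settings[key.strip().lower()] = value.strip()
    return settings


def find_conflicts(layers: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return each key that is set to different values in different layers."""
    conflicts = {}
    keys = {k for settings in layers.values() for k in settings}
    for key in sorted(keys):
        values = {name: s[key] for name, s in layers.items() if key in s}
        if len(set(values.values())) > 1:
            conflicts[key] = values
    return conflicts


global_layer = parse_layer("Style: Hand-drawn editorial illustrations\nBackground: Cream/off-white (#F5F0E8)")
template_layer = parse_layer("Style: Isometric 3D claymorphic dioramas\nMaterial: Soft polymer clay")
print(find_conflicts({"global": global_layer, "template": template_layer}))
```

Not every difference is a bug, since overriding the base is the whole point of a template. The value is in printing the disagreements before generation starts, so the decision gets made on purpose rather than by instinct.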

The site went from the old look to the new look in under two hours. Whether that’s impressive or terrifying depends on whether you noticed the part where two facts were wrong and the images almost came out in the wrong style.

The building is fast. The verification is what I’m still figuring out.

Ninety minutes, start to deployed. But only because thirty-five of those minutes were me not trusting the output.