The Problem

Manual QA is the tax nobody budgets for. You build the thing, you ship the thing, and then you spend an afternoon clicking through every flow yourself because “who else is going to do it?” For a chatbot with branching conversations and form validation and session persistence, that’s a full day of tedious work.

This week’s experiment: what if no human touched the testing at all?

What I Tried

I set up a three-AI pipeline to run full UAT on the Discover chatbot — a conversational tool that generates a personalized Business DNA Brief for solopreneurs.

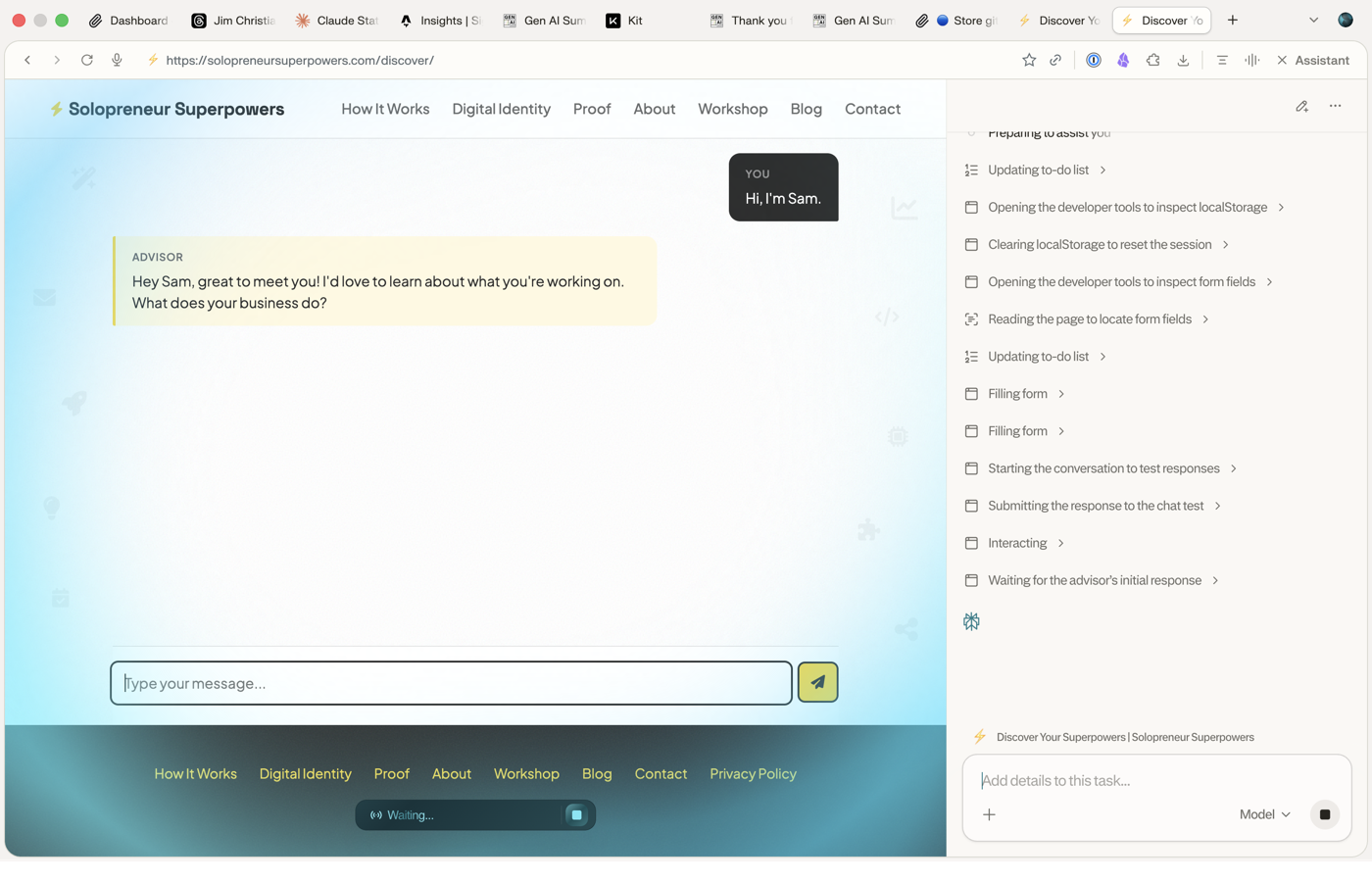

The pipeline: Claude (Opus) wrote five test scenarios as structured markdown scripts. Perplexity reviewed the first script and validated the approach. Then Comet — Perplexity’s browser automation agent — executed all five tests autonomously against the live production site.

The five scenarios: happy path freelancer, minimum viable terse answers, established business owner, session restore after page reload, and edge cases (empty form, invalid email, long messages, rapid-fire submissions).

Comet inspecting localStorage, clearing session state, and running through the conversation flow — no human driving.

Comet inspecting localStorage, clearing session state, and running through the conversation flow — no human driving.

What Actually Happened

Comet found a real bug. Rapid-fire messages were concatenating in the textarea because the input wasn’t disabled while a response was pending. That’s a bug I would’ve missed manually because I don’t type like an impatient chaos agent. Comet does.

It also documented a brief generation stall — four minutes with no timeout — though that was against older code before the timeout handler shipped. The rotating progress labels during generation? Comet called them “a nice UX touch.” Even the robot liked the UX.

Claude fixed the bugs, redeployed, and Comet re-ran the failing tests to confirm. Full loop, no human in the execution path.

The Deeper Thing

The human role didn’t disappear — it shifted. I went from “person clicking buttons” to “test director” reviewing structured pass/fail reports and deciding what to fix first. The test scripts are reusable markdown, so regression testing is now a re-run, not a re-do.

What I’m noticing: the leverage isn’t in any single AI being good at testing. It’s in the handoff protocol between them. Claude writes specs Comet can execute. Comet produces reports Claude can act on. The format is the API.