You know the moment. You’re mid-session with your AI co-pilot, doing something for the second time, and you think: I did this exact thing last week.

Most people treat tool-building as a separate activity. You sit down, spec out requirements, plan an interface, write the code. That works for big things. But the tools I reach for every day weren’t born that way. They grew out of friction I noticed while doing real work.

The Carousel Compositor

Last week I needed to build a social media carousel for Threads — eight slides, each with a claymorphic character illustration and a headline text overlay. The first time, I did it by hand. Individual tool calls, manual placement, one slide at a time.

The second time, I said “just script this.”

Claude Code produced two files: carousel-build.sh to orchestrate the full build (copy template backgrounds, call the compositor, optimize with sips and jpegoptim), and carousel-composite.py built on Pillow for the actual text overlay — auto-font-sizing, semi-transparent dark panels, rounded rectangles, centered text.

The compositor auto-sizes font to fit two lines max. Headlines just work regardless of length.
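A minimal sketch of how that kind of auto-sizing could work. This is not the actual carousel-composite.py: the function names, the size range, and the `measure` callback are all assumptions made for illustration. The idea is to start at the largest font size and step down until a greedy word wrap fits in two lines.

```python
from typing import Callable, List, Tuple

# measure(text, size) -> rendered width in pixels at that font size.
# Hypothetical hook: with Pillow it could wrap ImageFont.truetype(...).getlength.
Measure = Callable[[str, int], float]


def wrap(words: List[str], size: int, max_width: float, measure: Measure) -> List[str]:
    """Greedy wrap: keep adding words to a line until the next one would overflow."""
    lines: List[str] = []
    current = ""
    for word in words:
        candidate = f"{current} {word}".strip()
        if current and measure(candidate, size) > max_width:
            lines.append(current)
            current = word
        else:
            current = candidate
    if current:
        lines.append(current)
    return lines


def fit_headline(
    text: str,
    max_width: float,
    measure: Measure,
    max_size: int = 96,   # assumed starting size
    min_size: int = 24,   # assumed floor
    max_lines: int = 2,
    step: int = 2,
) -> Tuple[int, List[str]]:
    """Return the largest font size (and its wrapped lines) that fits the box."""
    words = text.split()
    for size in range(max_size, min_size - 1, -step):
        lines = wrap(words, size, max_width, measure)
        fits = all(measure(line, size) <= max_width for line in lines)
        if len(lines) <= max_lines and fits:
            return size, lines
    # Nothing fit: return the minimum size and let the caller decide what to do.
    return min_size, wrap(words, min_size, max_width, measure)
```

With Pillow, `measure` could be something like `lambda text, size: ImageFont.truetype(font_path, size).getlength(text)`, where `font_path` is whatever font the slide uses. Keeping the measurement injectable also means the wrapping logic can be checked without loading a font.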

Today I used those exact tools to build a nine-slide carousel — and extended the compositor to overlay screenshots too. The tools grew because the work grew. Nobody planned that feature. I needed it, mentioned it, and it was done in minutes.

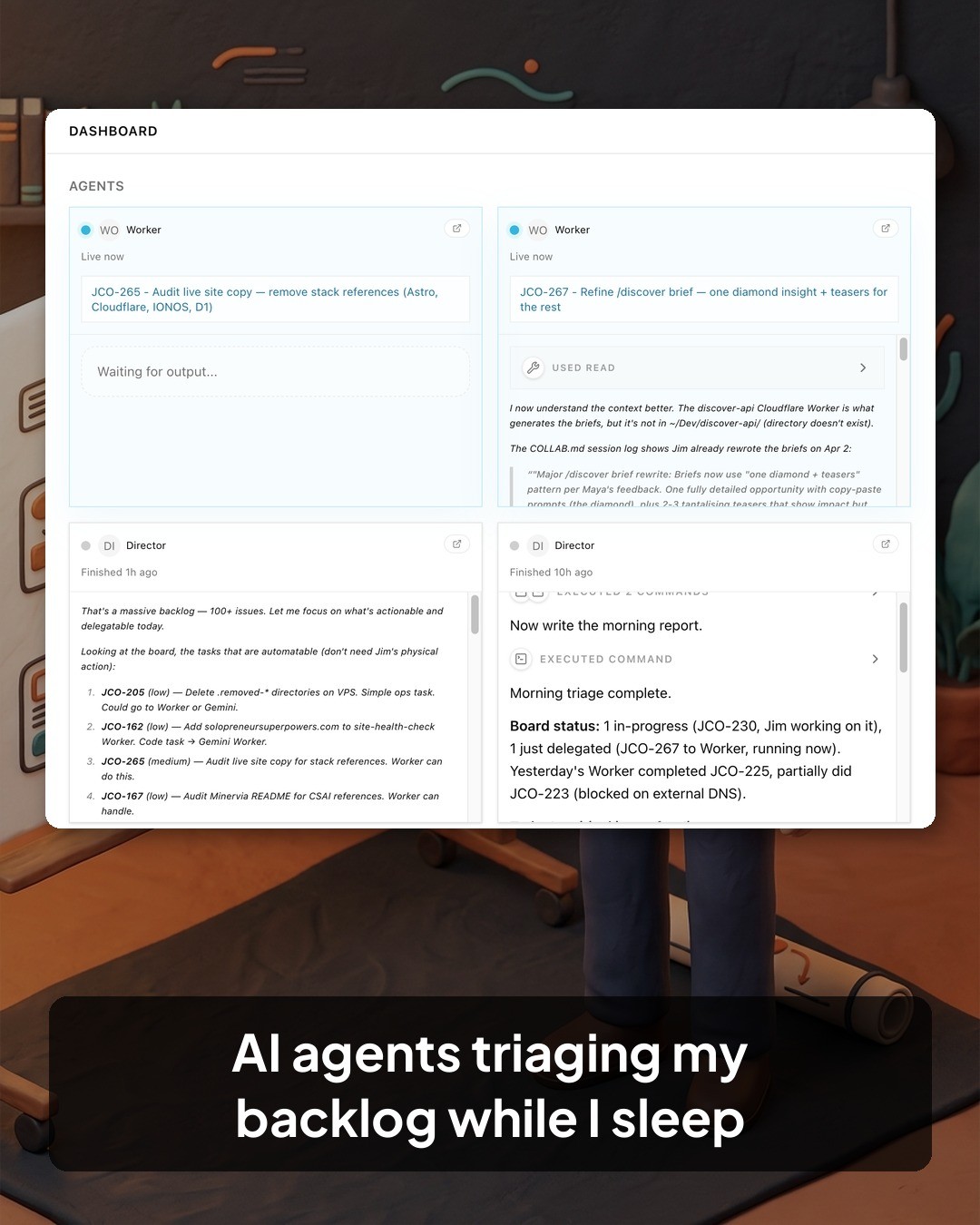

Here’s this week’s carousel, built entirely from the terminal — art generated, text composited, screenshots overlaid, queued to Buffer:

The Voice in the Terminal

I wanted Claude to talk back — actual voice feedback from the terminal. Not for novelty. For those moments when you’re staring at a build running and want a status update without reading a wall of text.

I researched Kokoro, an 82-million-parameter open-source TTS model (Apache 2.0 licensed). Three options: an MCP server, a CLI hook, or a FastAPI Docker service. I picked the CLI approach. An MCP server would load 350MB of model weights into RAM for the entire session, whether it spoke once or a hundred times. A CLI tool loads on demand.

The result: ~/bin/speak. One command generates and plays the audio, then cleans up its temp files. Cold start was around four seconds per utterance, acceptable but not great, so I added a warm HTTP server that keeps the model loaded and answers in about 0.4 seconds. The speak command tries the warm server first and falls back to a cold start if the server isn’t running.
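The warm-first, cold-fallback pattern is small enough to sketch. This is an illustration, not the real speak script: the URL, port, JSON shape, and function names are assumptions, and the cold path is passed in as a callable rather than implemented.

```python
import json
import urllib.request
from typing import Callable

# Hypothetical address for the warm server; the real one may differ.
WARM_URL = "http://127.0.0.1:8765/speak"


def speak_warm(text: str, url: str = WARM_URL, timeout: float = 10.0) -> None:
    """Ask an already-running server to synthesize and play the text."""
    body = json.dumps({"text": text}).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        response.read()


def speak(
    text: str,
    warm: Callable[[str], None],
    cold: Callable[[str], None],
) -> str:
    """Try the warm path; on any connection-level failure, use the cold path."""
    try:
        warm(text)
        return "warm"
    except OSError:
        # URLError, connection refused, and socket timeouts all derive from OSError.
        cold(text)
        return "cold"
```

A call might look like `speak("Build finished", speak_warm, load_model_and_play)`, where `load_model_and_play` stands in for whatever loads the model on demand. A refused connection on localhost fails almost instantly, so the fallback adds little to the four-second cold start.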

None of this was in a backlog. It emerged from wanting to hear a response instead of reading one.

The Buffer Bridge

I needed to queue social posts from the terminal without leaving Claude Code. Copying text into a browser breaks the flow of a session where you’ve just written the content and generated the images.

So a Buffer integration skill got built — wrapping Buffer’s GraphQL API with curl (not Python’s urllib, which gets Cloudflare 403’d). It supports LinkedIn, Threads, and X with per-platform character limits. The pattern: write the JSON payload with Python, post with curl.
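Here is a rough sketch of that "write JSON with Python, post with curl" split. Almost everything specific in it is an assumption: the endpoint is a placeholder, the mutation and its field names are hypothetical rather than Buffer's real schema, and the character limits are the commonly cited ones, which may not match what the skill enforces.

```python
import json
import tempfile
from typing import Dict, List

# Assumed limits for illustration; check each platform's current rules.
CHAR_LIMITS: Dict[str, int] = {"x": 280, "threads": 500, "linkedin": 3000}

# Placeholder endpoint, not Buffer's real URL.
ENDPOINT = "https://example.invalid/graphql"

# Hypothetical mutation; the real schema will differ.
MUTATION = "mutation($input: PostInput!) { createPost(input: $input) { id } }"


def check_length(platform: str, text: str) -> None:
    """Reject captions that exceed the platform's character limit."""
    limit = CHAR_LIMITS[platform]
    if len(text) > limit:
        raise ValueError(f"{platform}: {len(text)} chars exceeds limit of {limit}")


def write_payload(platform: str, text: str, image_urls: List[str]) -> str:
    """Validate the caption, write the GraphQL payload to a temp file, return its path."""
    check_length(platform, text)
    payload = {
        "query": MUTATION,
        "variables": {
            "input": {"platform": platform, "text": text, "images": image_urls}
        },
    }
    with tempfile.NamedTemporaryFile(
        "w", suffix=".json", delete=False, encoding="utf-8"
    ) as handle:
        json.dump(payload, handle)
        return handle.name


def curl_command(payload_path: str, token: str) -> List[str]:
    """Build the curl invocation; the body is read from the payload file."""
    return [
        "curl", "--silent", "--show-error", "--fail",
        "-X", "POST", ENDPOINT,
        "-H", "Content-Type: application/json",
        "-H", f"Authorization: Bearer {token}",
        "--data-binary", f"@{payload_path}",
    ]
```

The command list can be handed to `subprocess.run(cmd, check=True)`. Letting Python serialize the payload sidesteps shell-quoting problems with captions, while curl handles the actual request.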

Today I used it to queue the nine-slide carousel. Nine images and a 448-character caption, one API call. The tool didn’t exist three weeks ago.

The Deeper Thing

There’s a pattern here, and it’s not “build more tools.” It’s this:

The first time, you do the work. The second time, you notice the friction. The third time, you say “just script this” and your AI builds a tool.

That third step is where an AI co-pilot flips the cost-benefit. Without one, “just script this” means stopping your actual work, switching to tool-building mode, and spending an hour on something that might not be worth it. With one, it means describing what you just did manually and watching the tool appear in your session.

The tools also compound. carousel-build.sh calls carousel-composite.py. The compositor now handles screenshots because today’s carousel needed them. The Buffer skill queues what the carousel script produces. Each tool gets a little better every time it’s used, because each use is a chance to say “and also do this.”

The fastest path to automation isn’t a backlog of tool ideas. It’s noticing when you repeat yourself and letting your AI turn the repetition into a command.

Next

- How do these ad-hoc tools age? Will I still use speak in a month, or will it rot?

- What’s the right threshold: is the second time too soon to script? Is the third time too late?

- The compositor grew a new feature today (screenshot overlays). At what point does organic growth need a deliberate refactor?